Group 6: Erik Johnsson, Muhammad Tirmazi, Jessica Wijaya, and Ivan Zhang

GitHub Repository

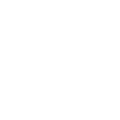

Cloud vendors, including Amazon AWS, Microsoft Azure and Google Cloud,

make their datacenter network accessible for clients by abstracting out

the complexities of the underlying interconnect. For the client, the

datacenter network is one large fabric that magically connects all of their

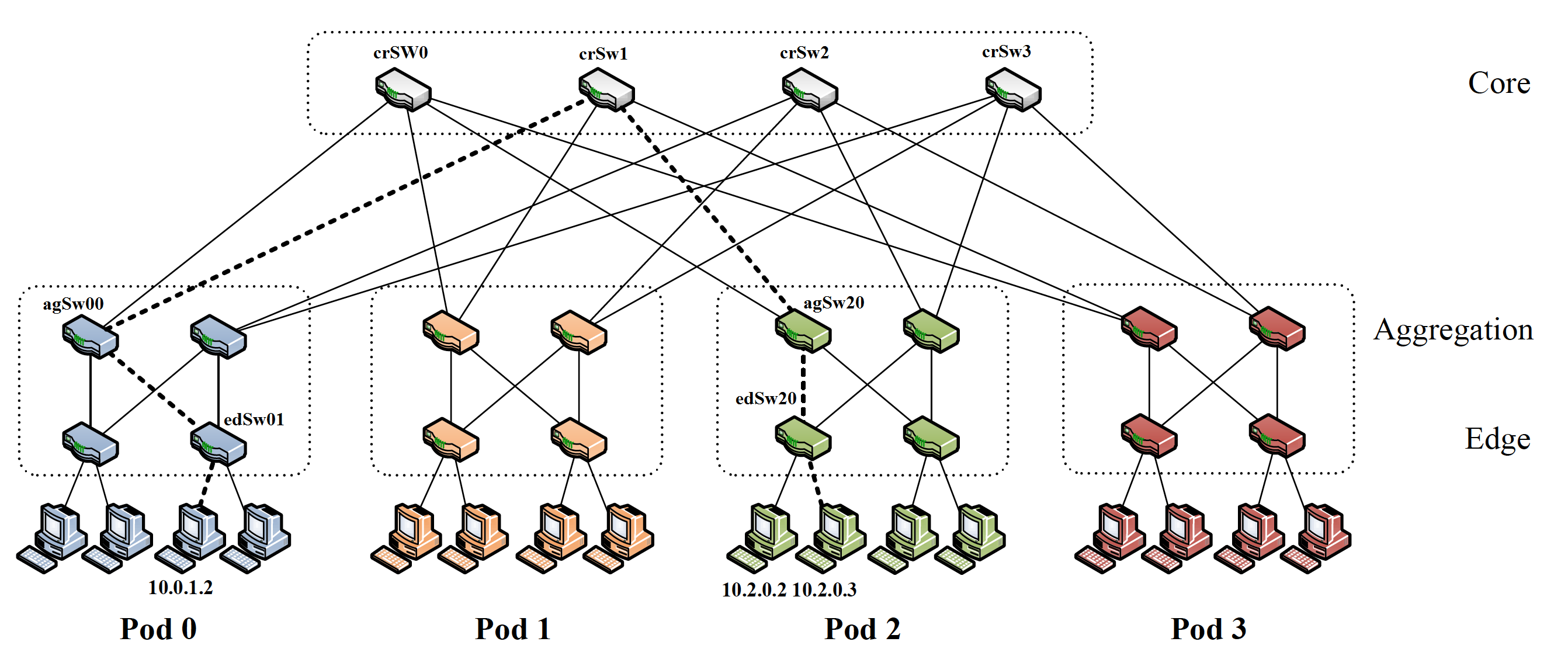

rented instances as in Figure 1.

Network simulators are usually serial. This proves to be a major issue for data center

frameworks which tend to be massive in scale with multiple highly interconnected

layers. Furthermore for large data centers, which are extremely expensive, network

issues like network congestion can result in additional high costs and service issues

if unaccounted for. Therefore these simulations are important as they help highlight issues

such as network bottlenecks in different network topologies. These simulations also help to

optimize network topologies and better balance traffic. We will use parallelization to deal

with the compute-intensive aspect of traversing network nodes (i.e. servers, switches, and links)

that comprise these simulations.

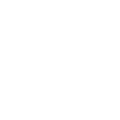

To get an intuition for why parallelizing a

network is non-trivial, consider the following naive way of dividing the problem.

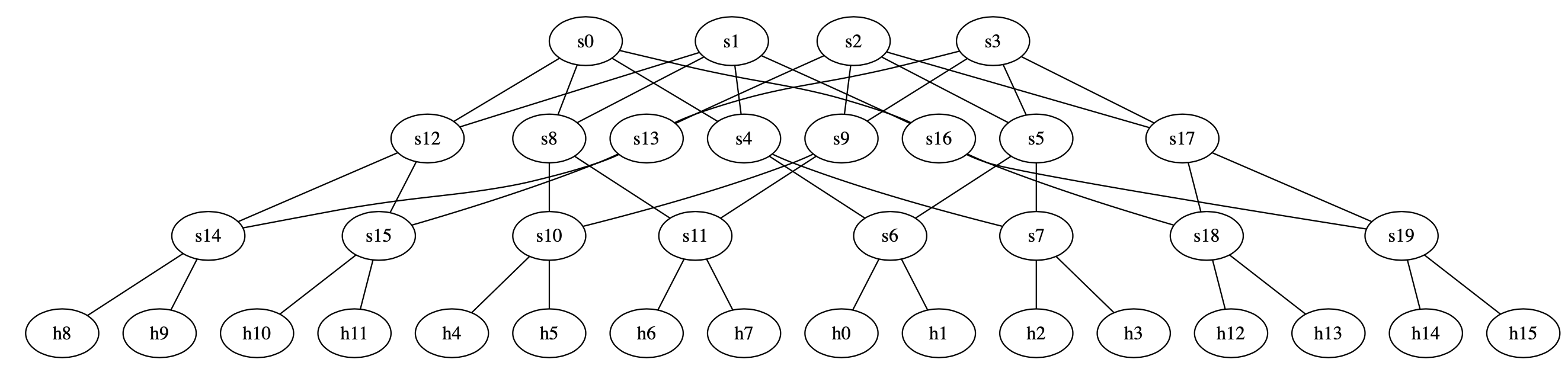

Approach 1: Simulate network devices in parallel. This involves writing a simulator for

a network switch and running parallel simulations for all the switches in figure 2 on

separate threads. The problem with this approach is that there is a dependence between

a switch and every other switch in the network. As an example, you cannot simulate the

load on any of the switches at the top of figure 2 (core) without knowing how much data

they are being sent by the switches underneath them (aggregation). The same goes for

any other set of switches one can think of.

Other approaches, such as trying to simulate network traffic in parallel, have similar pitfalls.

For our project, we used multi-processing for the purposes of traffic generation and for reading traces, traffic matrices, and network topologies. Furthermore, we used multi-threading for the execution of pruned/isolated network clusters on separate nodes. Finally shared memory is used to aggregate the results at the end as well as to share traffic information between nodes.

The design of our project consisted of 3 phases.

Phase 1: Fake traffic generation for network flow.

In order to generate fake traffic, we require user input in the form of the CDF of the traffic

as well as the interarrival times. With the CDF, we use random sampling on the inverse CDF in order

to simulate the load of the time. Finally, we use the Poisson Process in order to get the time of the

next flow.

Phase 2: Fake network creation with switches and service/time delay for simulation.

For this phase, we implemented a M/M/1 Queue that simulated the service time of the switches. This

queue is then processed in a FIFO manner. While this queue is processed, we add the link delay between

switches as the network simulation runs. This queue will run through the network topology created in

the next phase.

Phase 3: Evaluation of network topologies using simulation.

We realize that network packets don't always interact with every single part of the network. Thus we can

parallelize the network through the creation of isolated network clusters. Thus in this phase, we

prune the entire network into network clusters that are isolated from one another

(i.e. packets don't move from one network cluster to another). In this way, we can parallelize flows

through each of these network clusters and speedup the performance of the simulation.

Phase 1 and Phase 2 are combined into the "Traffic Generation" component of our project and Phase 3

is the "Network Simulation" component of our project.

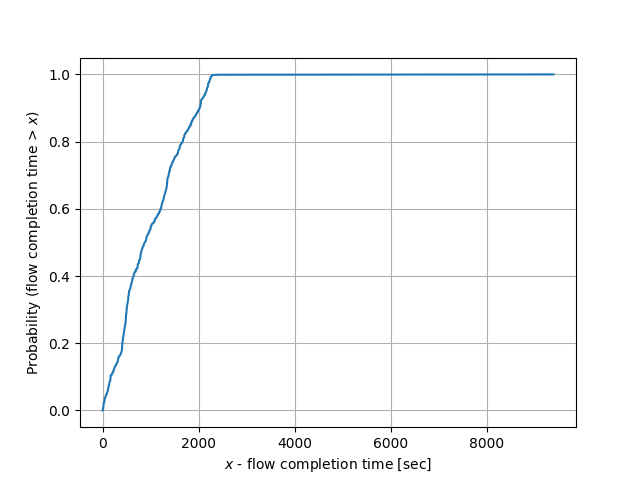

At the end of our project, we produce a flow completion time CDF like the one shown below (which was

generated from a "fork" topology of one sender and two receivers).

For our network simulation, we chose to create a fat tree topology. A graphical representation can be found below.

Examples of network flow size traces can be found publicly from

CAIDA (Center for Applied Internet Data Analysis) [4],

GitHub [5], and

Google [3].

More information on the use of the Poisson Process for network load generation can be found on

Alizadeh et. al (MIT) [1], and

Harchol-Balter et. al (Carnegie Mellon)

[2].

Machine Specifications

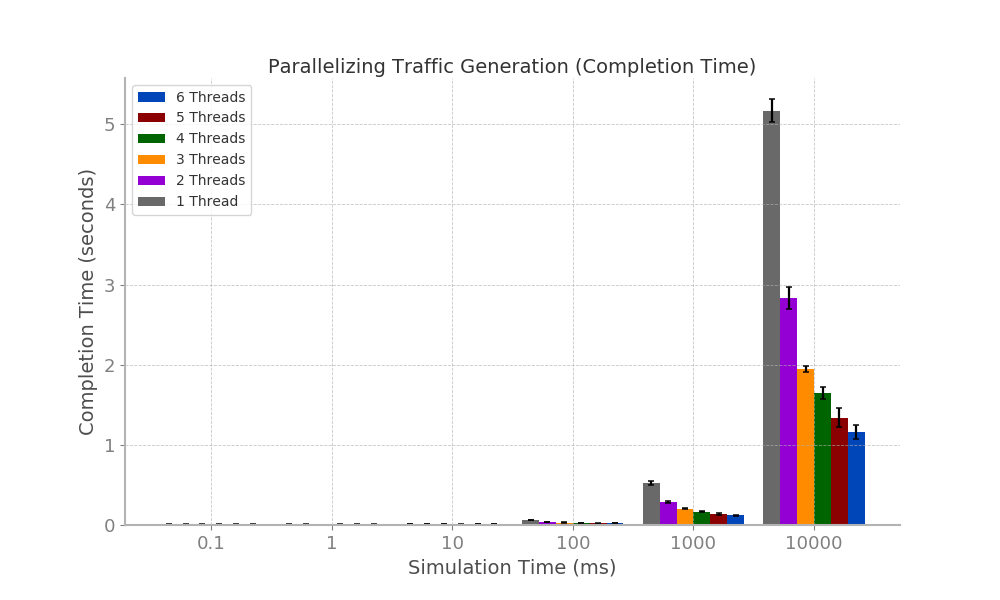

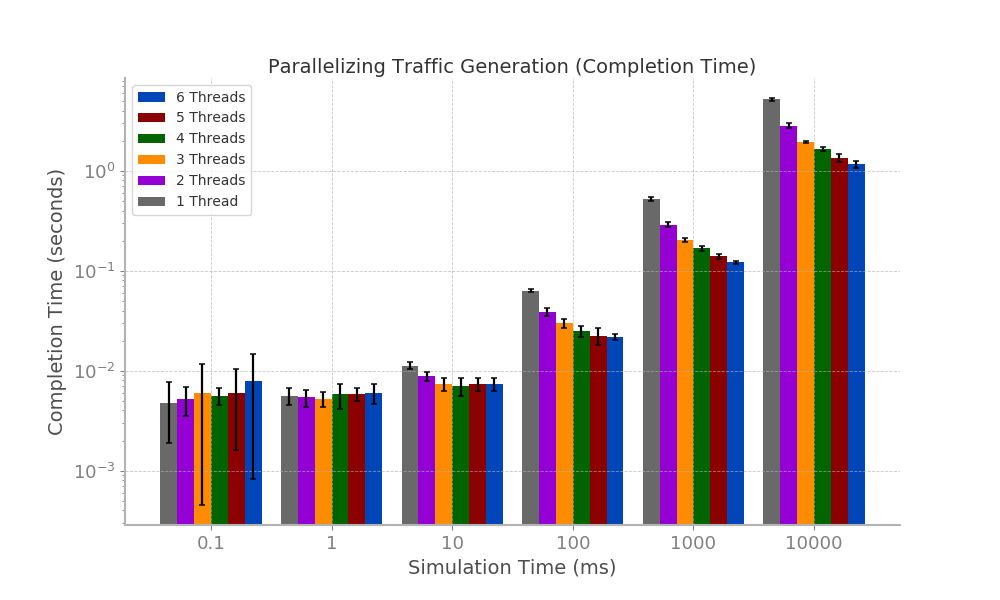

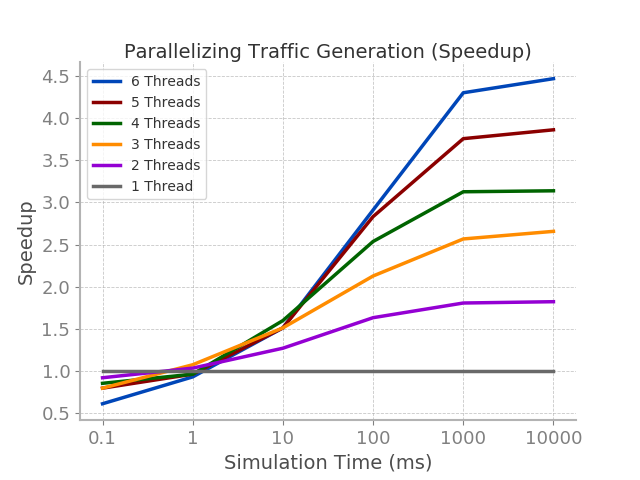

We used OpenMP to parallelize the traffic generation code on an increasing amount of threads

alongside increasing simulation times (in ms) which requires more data to be generated.

These simulation times increased in a exponential manner from 0.1 to 1 and eventually to 10000.

Bar plots for the traffic generation phases can be found below (left is on a linear scale

and right is on a logarithmic scale). Furthermore, these bar plots display the error margins.

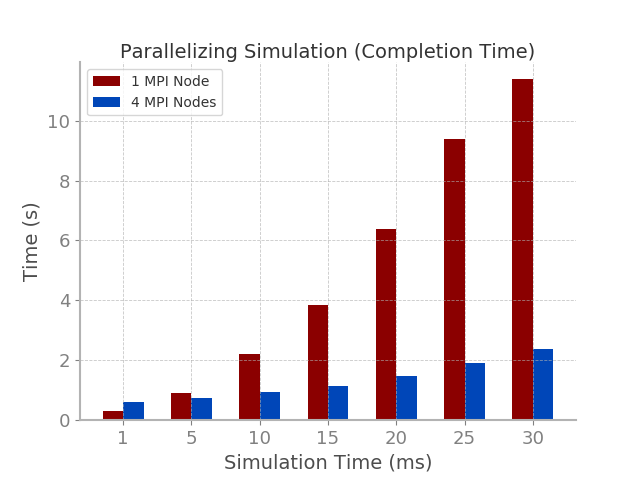

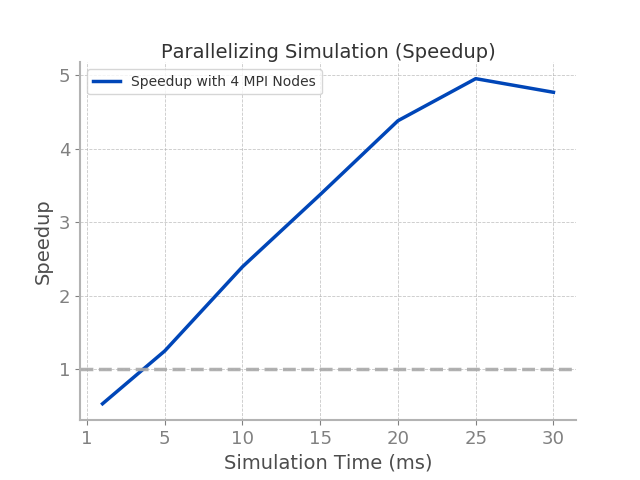

We used MPI to parallelize the network simulation code on 1 MPI node and 4 MPI nodes for increasing simulation times that were

generated in the Traffic Generation aspect of our code.

Bar plots for the network simulation phase can be found below.

The main challenges we faced were:

The main overheads of our project were:

Parallelization of our Traffic Generation code significantly benefitted it performance although the rate

of its speedup increases started to decline after simulation times over 1000 ms. It should be noted, however, that

parallelization only helped Traffic Generations of simulation times over 100 ms due to the added overheads that

comes with parallelization. Thus, we suggest that parallelization by increasing threads with OpenMP

is a viable way of generating the large traffic flows needed for network simulations. One caveat, however,

is that speedup starts to remain constant after simulation times of 1000 ms which could be addressed with more threads

or other improvements in the code (see Future Work below).

Parallelization of our Network Simulation with just 4 MPI nodes resulted in massive improvements in performance,

although speedup started to decline after simulation times of 30 ms. It should also be noted that (like the parallelization

of the traffic generation), parallelization provided little benefits and was, at times, detrimental to completion

time for simulation times at or below 5 ms. Thus, we suggest that parallelization through the use of MPI nodes is viable

way to reduce the completion time of large network simulations. The benefits/speedups of this parallelization

could be potentially greater with the use of more MPI nodes (see Future Work below).

In the future, we would like to investigate whether an increasing number of MPI nodes will further speedup the simulation. Furthermore, we would like to look into the posibility of GPU computing as a way of improving our traffic generation as well as network simulation code. Additionally, we can find methods to deal with overheads that hinder the speed of some aspects of our project.